Image Enhancement

Image Enhancement Based on Pigment Representation

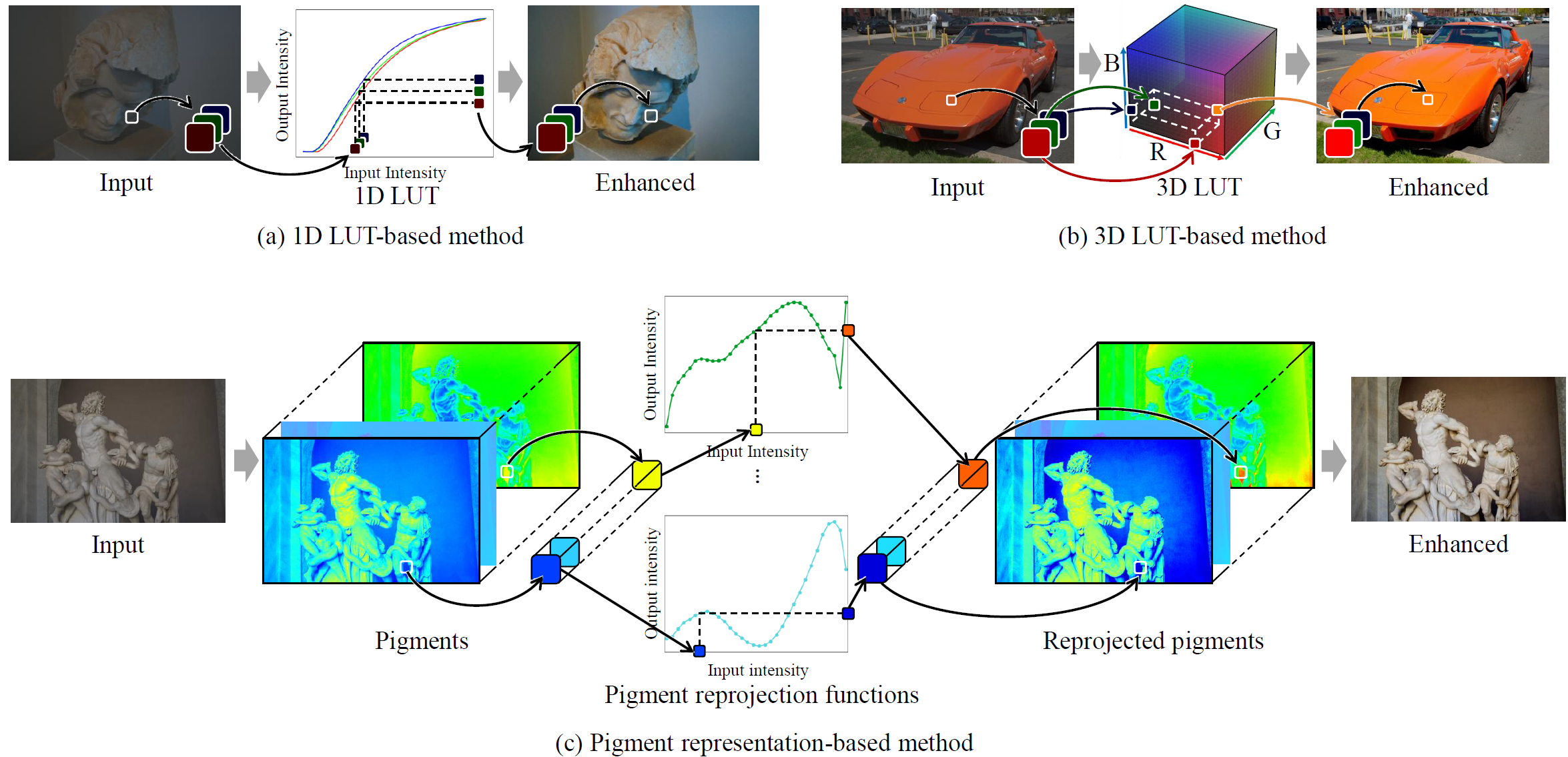

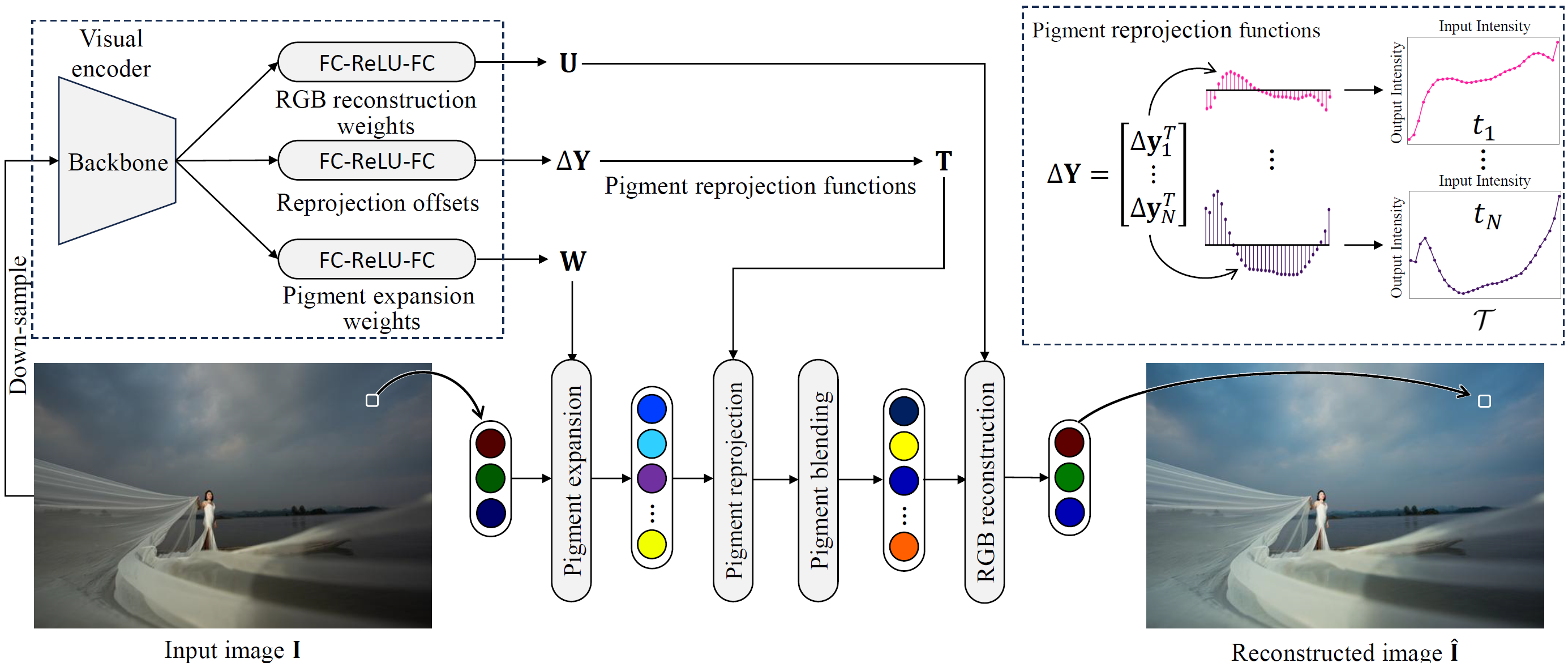

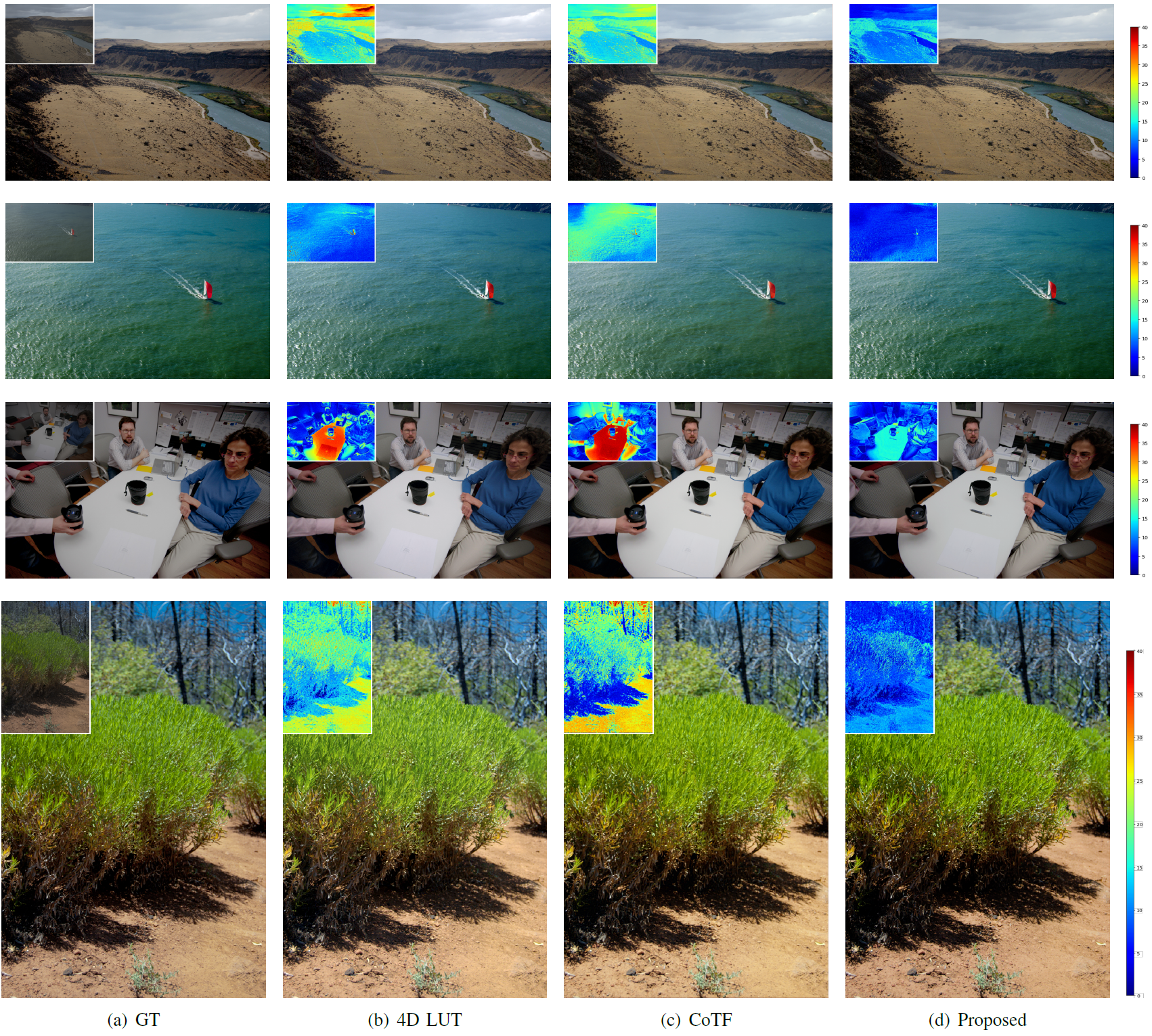

We develop deep learning-based image enhancement methods that adaptively improve visual quality across diverse conditions. Our core approach transforms input RGB colors into a high-dimensional pigment representation customized for each image, which enables more complex color mappings than conventional predefined color spaces such as RGB or CIE LAB allow. The pigment-based method consists of five stages: a visual encoder, pigment expansion, pigment reprojection, pigment blending, and RGB reconstruction. In parallel, we explore the deformable control point network (DCPNet), which flexibly parameterizes a global transformation function for each color channel and is applicable to photo retouching, tone mapping, and underwater image enhancement.
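To make the data flow of the pigment stages concrete, here is a minimal NumPy sketch. It is an illustration under stated assumptions, not the published implementation: the linear expansion, the piecewise-linear per-pigment curves, and the matrix-based blending and reconstruction are simplifications chosen for readability, and the names `enhance_with_pigments` and `identity_params` are hypothetical. In the method described above, the visual encoder would supply image-specific parameters; here they are simply passed in by hand.

```python
import numpy as np


def enhance_with_pigments(rgb, expand_w, curves_x, curves_y, blend_w, recon_w):
    """Toy pipeline: expansion -> reprojection -> blending -> RGB reconstruction.

    rgb:      (H, W, 3) float array in [0, 1]
    expand_w: (3, N) matrix lifting RGB to N pigment channels (assumed linear)
    curves_x: (K,) increasing knot positions in [0, 1], shared by all pigments
    curves_y: (N, K) curve values at the knots, one curve per pigment
    blend_w:  (N, N) matrix mixing the reprojected pigments
    recon_w:  (N, 3) matrix mapping blended pigments back to RGB
    """
    h, w, _ = rgb.shape
    pixels = rgb.reshape(-1, 3)

    # Pigment expansion: lift each RGB color to N pigment values.
    pigments = np.clip(pixels @ expand_w, 0.0, 1.0)

    # Pigment reprojection: remap every pigment channel with its own 1D curve.
    reprojected = np.stack(
        [np.interp(pigments[:, n], curves_x, curves_y[n])
         for n in range(pigments.shape[1])],
        axis=1,
    )

    # Pigment blending: aggregate information across pigment channels.
    blended = reprojected @ blend_w

    # RGB reconstruction: project the pigments back to three channels.
    out = blended @ recon_w
    return np.clip(out, 0.0, 1.0).reshape(h, w, 3)


def identity_params(copies=2, knots=8):
    """Hypothetical parameters for which the pipeline returns its input."""
    eye = np.eye(3)
    n = 3 * copies
    expand_w = np.hstack([eye] * copies)          # (3, N): repeat R, G, B
    curves_x = np.linspace(0.0, 1.0, knots)       # shared knot positions
    curves_y = np.tile(curves_x, (n, 1))          # identity curve per pigment
    blend_w = np.eye(n)                           # no mixing
    recon_w = np.vstack([eye] * copies) / copies  # average the copies
    return expand_w, curves_x, curves_y, blend_w, recon_w
```

Calling `enhance_with_pigments(img, *identity_params())` reproduces `img`, which is a convenient sanity check. Replacing `curves_y` with a concave curve such as `np.sqrt(curves_y)` brightens the output, which shows how per-pigment curves alone already change the color mapping.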

| Method | PSNR ↑ | SSIM ↑ | ΔEab ↓ | # Params | Runtime |
|---|---|---|---|---|---|
| UPE | 21.88 | 0.853 | 10.80 | 927.1K | - |
| DPE | 23.75 | 0.908 | 9.34 | 3.4M | - |
| HDRNet | 24.66 | 0.915 | 8.06 | 483.1K | - |
| CSRNet | 25.17 | 0.921 | 7.75 | 36.4K | 0.71 ms |
| DeepLPF | 24.73 | 0.916 | 7.99 | 1.7M | 36.69 ms |
| 3D LUT | 25.29 | 0.920 | 7.55 | 593.5K | 0.80 ms |
| SepLUT | 25.47 | 0.921 | 7.54 | 119.8K | 6.20 ms |
| AdaInt | 25.49 | 0.926 | 7.47 | 619.7K | 1.89 ms |
| RSFNet | 25.49 | 0.924 | 7.23 | 16.1M | 7.28 ms |
| 4D LUT | 25.50 | 0.931 | 7.27 | 924.4K | 1.25 ms |
| HashLUT | 25.50 | 0.926 | 7.46 | 114.0K | - |
| CoTF | 25.54 | 0.938 | 7.46 | 310.0K | 4.28 ms |
| Proposed | 25.82 | 0.939 | 7.15 | 765.0K | 1.43 ms |
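For readers unfamiliar with the columns above, the following sketch shows how PSNR and ΔEab are commonly computed. It rests on assumptions: the page does not state the evaluation protocol, so the peak value of 1.0, the CIE76 form of the color difference (Euclidean distance in CIE LAB), and the function names `psnr` and `delta_e_ab` are illustrative choices. The RGB-to-LAB conversion is left out; the inputs to `delta_e_ab` are assumed to be LAB arrays already.

```python
import numpy as np


def psnr(pred, target, peak=1.0):
    """Peak signal-to-noise ratio in dB for images scaled to [0, peak]."""
    pred = np.asarray(pred, dtype=np.float64)
    target = np.asarray(target, dtype=np.float64)
    mse = np.mean((pred - target) ** 2)
    if mse == 0.0:
        return float("inf")  # identical images
    return float(10.0 * np.log10(peak ** 2 / mse))


def delta_e_ab(lab_pred, lab_target):
    """Mean CIE76 color difference between two (H, W, 3) CIE LAB images."""
    diff = (np.asarray(lab_pred, dtype=np.float64)
            - np.asarray(lab_target, dtype=np.float64))
    # Per-pixel Euclidean distance over (L, a, b), averaged over the image.
    return float(np.mean(np.sqrt(np.sum(diff ** 2, axis=-1))))
```

Higher PSNR and lower ΔEab both indicate an output closer to the reference, which matches the arrows in the table header.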

Publications

- Se-Ho Lee, Keunsoo Koh, and Seung-Wook Kim, “Image enhancement based on pigment representation,” IEEE Transactions on Multimedia, 2026. [DOI] [Project]

- Se-Ho Lee and Seung-Wook Kim, “DCPNet: Deformable control point network for image enhancement,” Journal of Visual Communication and Image Representation, vol. 104, Art. no. 104308, Oct. 2024. [DOI]